Some media coverage

Here’s a nice popular summary of the paper that I published with Emil Burman last month on how temperature affects the microbial model community THOR. I think Miles Martin at The Academic Times did a great job distilling my ramblings into a coherent story. Good job Miles!

I did not know about The Academic Times before this but will keep an eye on this relatively new publication aiming to popularize and distill scientific content for other scientists.

In other popularization-of-science-news, I got interviewed last week by New Scientist about a very exciting paper that came out this week on travelers picking up antibiotic resistance genes in Africa and Asia. The study was quite similar to what we did back in 2015, but used a much larger data set and uncovered that there are many, many more resistance genes that are enriched after travel than what we found using our more limited dataset. Very cool study, and you can read the New Scientist summary here.

A good journal editor

Since I have previously criticized the practice of uninviting reviewers before the proposed deadline, I just wanted to share a very positive experience on the same theme. Yesterday, I received a very thoughtful message, containing the lines: “(…) Unless you have started your review, I would like to un-invite you from this assignment. You are not late with your review, but I have enough reviews with which to make a decision. Please let me know if you still wish to complete the review. (…)”

This is how easy it is to make a reviewer happy. Had I, for example, read the paper but not finished the report, I would have had the chance to submit it. In this case, other things had come in between and I had not yet started reading the manuscript. Thus, I was happy to pass on this one.

Other journal editors, take note. This is how you avoid pissing off reviewers (and it’s really not that hard).

Published book chapter: Strategies for metagenomic analysis

Last summer, I was approached by Muniyandi Nagarajan to write a book chapter for a book on metagenomics. The book was published earlier this month, and is now available online (1). I have to admit that I have not yet read the entire book, but my own chapter deals with selecting the right tools for metagenomic analysis, and discusses different strategies to perform taxonomic classification, functional analysis, metagenomic assembly, and statistical comparisons between metagenomes (2). The chapter also considers the pros and cons of automated computational “pipelines” for analysis of metagenomic data. While I do not point to a specific set of software that obviously performs better in all situations, I do highlight some analysis strategies that clearly should be avoided. The chapter also suggests a few among the set of robust and well-functioning software tools that, in my opinion, should be used for metagenomic analyses. To some degree, this chapter overlaps with the review paper we wrote on using metagenomics to analyze antibiotic resistance genes in various environments, published earlier this year (3), but the discussion in the book chapter is far more general. I imagine that the book chapter could be used, for example, in teaching metagenomics to students in bioinformatics (that’s at least a use I envision myself). Finally, apart from my own chapter, I can also highly recommend the chapter by Boulund et al. on statistical considerations for metagenomic data analysis (4). The book is available to buy from here, and the chapter can be read here.

References

- Nagarajan M (Ed.) Metagenomics: Perspectives, Methods, and Applications. ISBN: 9780081022689. Academic Press, Elsevier, USA (2018). doi: 10.1016/B978-0-08-102268-9 [Link]

- Bengtsson-Palme J: Strategies for Taxonomic and Functional Annotation of Metagenomes. In: Nagarajan M (Ed.) Metagenomics: Perspectives, Methods, and Applications, 55–79. Academic Press, Elsevier, USA (2018). doi: 10.1016/B978-0-08-102268-9.00003-3 [Link]

- Bengtsson-Palme J, Larsson DGJ, Kristiansson E: Using metagenomics to investigate human and environmental resistomes. Journal of Antimicrobial Chemotherapy, 72, 2690–2703 (2017). doi: 10.1093/jac/dkx199 [Paper link]

- Boulund F, Pereira MB, Jonsson V, Kristiansson E: Computational and Statistical Considerations in the Analysis of Metagenomic Data. In: Nagarajan M (Ed.) Metagenomics: Perspectives, Methods, and Applications, 81–102. Academic Press, Elsevier, USA (2018). doi: 10.1016/B978-0-08-102268-9.00004-5 [Link]

Scientific Data – a way of getting credit for data

In an interesting development, Nature Publishing Group has launched a new initiative: Scientific Data – an online-only open access journal that publishes data sets without the demand of testing scientific hypotheses in connection to the data. That is, the data itself is seen as the valuable product, not any findings that might result from it. There is an immediate upside of this; large scientific data sets might be accessible to the research community in a way that enables proper credit for the sample collection effort. Since there is no demand for a full analysis of the data, the data itself might be of use to others more quickly, without worrying that someone else might steal the bang of the data per se. I also see a possible downside, though. It would be easy to hold on to the data until you have analyzed it yourself, and then release it separately just about when you submit the paper on the analysis, generating extra papers and citation counts. I don’t know if this is necessarily bad, but it seems it could contribute to “publishing unit dilution”. Nevertheless, I believe that this is overall a good initiative, although how well it actually works will be up to us – the scientific community. Some info copied from the journal website:

Scientific Data’s main article-type is the Data Descriptor: peer-reviewed, scientific publications that provide an in-depth look at research datasets. Data Descriptors are a combination of traditional scientific publication content and structured information curated in-house, and are designed to maximize reuse and enable searching, linking and data mining. (…) Scientific Data aims to address the increasing need to make research data more available, citable, discoverable, interpretable, reusable and reproducible. We understand that wider data-sharing requires credit mechanisms that reward scientists for releasing their data, and peer evaluation mechanisms that account for data quality and ensure alignment with community standards.

A thought on peer review and responsibility

I read an interesting note today in Nature regarding the willingness to review papers. The author of the note (Dan Graur) claims that scientists who publish many papers contribute less to peer review, and proposes a system in which “journals should ask senior authors to provide evidence of their contribution to peer review as a condition for considering their manuscripts.” I think that this is a very interesting thought; however, I see other problems coming with it. Let us for example assume that a senior author is neglecting peer review not to be evil, but simply due to an already monumental workload. If we force peer review on such a person, what kind of reviews do we expect to get back? Will this person be able to fulfill a proper, high-quality, peer review assignment? I doubt it.

On the other hand, I don’t have a good alternative either. If no one wants to do the peer reviewing, that system will inevitably break down. However, I think that it would be better to encourage peer review with positive bonuses, rather than pressure – maybe faster handling times, and higher priority, for papers with authors who have done their share of peer reviewing over the last two years? Maybe cheaper publishing costs? In any case, I welcome that the subject is brought up for debate, since it is immensely important for the way we perform science today. Thanks Dan!

Regarding ResearchGate and paper requests

I have recently started to receive requests for full-text versions of my publications on ResearchGate. That’s great, but I have yet to figure out how to send them over, without breaking any agreements. As I am in a somewhat intensive work-period at the moment, please forgive me for not spending time on ResearchGate right now. And if you would like full-text versions of my publications, please send me an e-mail! I’ll be glad to help!

Metagenomics and the Hype Cycle

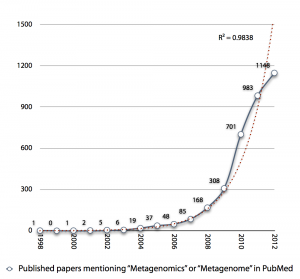

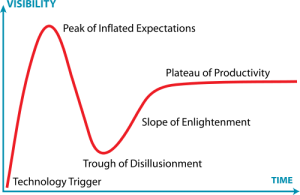

I was creating the diagram below for an upcoming presentation, and I realized that the exponential growth in published metagenomics papers might be coming to an end. Interestingly enough, the small drop in pace in recent years (701 -> 983 -> 1148) reminds me of the Hype Cycle, where we would (if my projection holds) have reached the “Peak of Inflated Expectations”, which means that we will see a rapid drop in the number of metagenomics publications in the next few years, as the field moves on.

The thought is interesting, but it seems a little bit early to draw any conclusions from the number of publications, yet. It is still kind of strange to note, though, that more than 20% of metagenomics publications (740/3547) are review papers. Come on, let’s do some science first and then review it… Anyway, it’ll be interesting to see what 2013 has in store for us.
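As a quick sanity check on the numbers quoted above, here is a minimal Python sketch that computes the year-over-year growth and the share of review papers. The only inputs are the figures from this post (701, 983, 1148 and 740/3547); the function and variable names are made up for illustration, and which calendar years the three counts belong to is left open.

```python
def growth_rates(counts):
    """Relative growth between each pair of consecutive yearly counts."""
    return [(later - earlier) / earlier
            for earlier, later in zip(counts, counts[1:])]


# Yearly metagenomics publication counts quoted in the post
yearly_counts = [701, 983, 1148]
rates = growth_rates(yearly_counts)

# Review papers as a fraction of all metagenomics publications (740/3547)
review_share = 740 / 3547

for rate in rates:
    print(f"Year-over-year growth: {rate:.1%}")
print(f"Share of review papers: {review_share:.1%}")
```

The second growth figure comes out clearly lower than the first, which is the slowdown I am referring to, but two data points are of course a thin basis for any projection.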


Underpinning Wikipedia’s Wisdom

In December, Alex Bateman, whose opinions on open science I support and have touched upon earlier, wrote a short correspondence letter to Nature [1] in which he repeated the points of his talk at FEBS last summer. He concludes with the paragraph:

Many in the scientific community will admit to using Wikipedia occasionally, yet few have contributed content. For society’s sake, scientists must overcome their reluctance to embrace this resource.

I agree with this statement. However, as I also touched upon earlier, but would like to repeat – bold statements don’t make dreams come true – action does. Rfam, and the collaboration with RNA Biology and Wikipedia, is a great example of such actions. So what other actions may be necessary to get researchers to contribute to the Wikipedian wisdom?

First of all, I do not think that the main obstacle to getting researchers to edit Wikipedia articles is reluctance to do so because Wikipedia is “inconsistent with traditional academic scholarship”, though that might be a partial explanation. What I think is the major problem is the time-reward tradeoff. Given the focus on publishing peer-reviewed articles, the race for higher impact factor, and the general tendency of measuring science by statistical measures, it should be no surprise that Wikipedia editing is far down on most scientists’ to-do lists, so also on mine. The reward of editing a Wikipedia article is a good feeling in your stomach that you have benefitted society. Good stomach feelings will, however, feed my children just as little as freedom of speech. Still, both Wikipedia editing and freedom of speech are extremely important, especially as a scientist.

Thus, there is a great need of a system that:

- Provides a reward or acknowledgement for Wikipedia editing.

- Makes Wikipedia editing economically sustainable.

- Encourages publishing of Wikipedia articles, or contributions to existing ones as part of the scientific publishing process.

Such a system could include a “contribution factor” similar to the impact factor, in which contributions to Wikipedia and other open access forums were weighted, with or without a usefulness measure. Such a usefulness measure could easily be determined by links from other Wikipedia articles, or similar. I realise that there would be severe drawbacks of such a system, similar to those of the impact factor system. I am not a huge fan of impact factors (read e.g. Per Seglen’s 1997 BMJ article [2] for some reasons why), but I do not see that system changing any time soon, and thus some kind of contribution factor could provide an additional statistical measure for evaluators to consider when examining scientists’ work.

While a contribution factor would be an incentive for researchers to contribute to the common knowledge, it will still not provide an economic value to do so. This could easily be changed by allowing, and maybe even requiring, scientists to contribute to Wikipedia and other public fora of scientific information as part of their science outreach duties. In fact, this public outreach duty (“tredje uppgiften” in Swedish) is governed by Swedish law. In 2009, the universities in Sweden were assigned to “collaborate with the society and inform about their operations, and act such that scientific results produced at the university benefits society” (my translation). It seems rational that Wikipedia editing would be part of that duty, as that is the place where many (most?) people find information online today. Consequently, it is only up to the universities to demand 30 minutes of Wikipedia editing per week/month from their employees. Note here that I am referring to paid editing.

Another way of increasing the economic appeal of writing Wikipedia articles would be to encourage funding agencies and foundations to demand Wikipedia articles or similar as part of project reports. This would require researchers to make their findings public in order to get further funding, a move that would greatly increase the importance of adding to the common wisdom treasure. However, I suspect that many funding agencies, as well as researchers, would be reluctant to accept such a solution.

Lastly, as shown by the Rfam/RNA Biology/Wikipedia relationship, scientific publishing itself could be tied to Wikipedia editing. This process could be started by e.g. open access journals such as PLoS ONE, either by demanding short Wikipedia notes to get an article published, or by simply providing prioritised publishing of articles which also have an accompanying Wiki-article. As mentioned previously, these short Wikipedia notes would also go through a peer-review process along with the full article. By tying this to the contribution factor, further incentives could be provided to get scientific progress in the hands of the general public.

Now, all these ideas put a huge burden on already hard-working scientists. I realise that they cannot all be introduced simultaneously. Opening up publishing requires time and thought, and should be done in small steps. But doing so is in the interest of scientists, the general public and the funders, as well as politicians. Because in the long run it will be hard to argue that society should pay for science when scientists are reluctant to even provide the public with an understandable version of the results. Instead of digging such a hole for ourselves, we should adapt the reward, evaluation, funding and publishing systems in a way that they benefit both researchers and the society we often say we serve.

- Bateman and Logan. Time to underpin Wikipedia wisdom. Nature (2010) vol. 468 (7325) pp. 765

- Seglen. Why the impact factor of journals should not be used for evaluating research. BMJ (1997) vol. 314 (7079) pp. 498-502

Raising the bar for genome sequencing

In a recent Nature article (1), Craig Venter and his co-workers at JCVI have not only sequenced one marine bacterium, but 137 different isolates. The main goal of this study was to better understand the ecology of marine picoplankton in the context of Global Ocean Sampling (GOS) data (2,3). As I see it, there are at least two really interesting things going on here:

First, this is a milestone in sequencing. We’re not talking one genome – one article anymore. We’re talking one article – 137 new genomes. This vastly raises the bar for any sequencing efforts in the future, but even more importantly, it shifts the focus even further from the actual sequencing to the purpose of the sequencing. One sequenced genome might be interesting enough if it fills a biological knowledge gap, but just sequencing a bacterial strain isn’t worth that much anymore. With the arrival of second- and third-generation sequencing techniques, this development was pretty obvious, but this article is (to my knowledge) the first real proof that this has finally happened. I expect that five to ten years from now, not sequencing an organism of interest for your research will be viewed as very strange and backwards-looking. “Why didn’t you sequence this?” will be a highly relevant review question for many publications. But also the days when you could write “we here publish for the first time the complete genome sequence of <insert organism name here>” and have that as the central theme for an article will soon be over. Sequencing will simply be reduced to the (valuable) tool it actually is. Which is probably good, as it brings us back to biology again. Articles like this one, where you look at ~200 genomes to investigate ecological questions, are simply providing a more relevant biological perspective than staring at the sequence of one genome in a time when DNA-data is flooding over us.

Second, this is the first (again, to my knowledge) publication where questions arising from metagenomics (2,3,4) have initiated a huge sequencing effort to understand the ecology of the environment to which the metagenome is associated. This highlights a new use of metagenomics as a prospective technique, to mine various environments for interesting features, and then select a few of its inhabitants and look closer at who is responsible for what. With a number of emerging single cell sequencing and visualisation techniques (5,6,7,8) as well as the application of cell sorting approaches to environmental communities (5,9), we can expect metagenomics to play a huge role in organism, strain and protein discovery, but also in determining microbial ecosystem services. Though Venter’s latest article (1) is just a first step towards this new role for metagenomics, it’s a nice example of what (meta)genomics could look like towards the end of this decade, if not sooner.

- Yooseph et al. Genomic and functional adaptation in surface ocean planktonic prokaryotes. Nature (2010) vol. 468 (7320) pp. 60-6

- Yooseph et al. The Sorcerer II Global Ocean Sampling expedition: expanding the universe of protein families. Plos Biol (2007) vol. 5 (3) pp. e16

- Rusch et al. The Sorcerer II Global Ocean Sampling expedition: northwest Atlantic through eastern tropical Pacific. Plos Biol (2007) vol. 5 (3) pp. e77

- Rusch et al. Characterization of Prochlorococcus clades from iron-depleted oceanic regions. Proceedings of the National Academy of Sciences of the United States of America (2010) pp.

- Woyke et al. Assembling the marine metagenome, one cell at a time. PLoS ONE (2009) vol. 4 (4) pp. e5299

- Woyke et al. One bacterial cell, one complete genome. PLoS ONE (2010) vol. 5 (4) pp. e10314

- Moraru et al. GeneFISH – an in situ technique for linking gene presence and cell identity in environmental microorganisms. Environ Microbiol (2010) pp.

- Lasken. Genomic DNA amplification by the multiple displacement amplification (MDA) method. Biochem Soc Trans (2009) vol. 37 (Pt 2) pp. 450-3

- Mary et al. Metaproteomic and metagenomic analyses of defined oceanic microbial populations using microwave cell fixation and flow cytometric sorting. FEMS microbiology ecology (2010) pp.

A future system for publications?

I listened to a great talk by Alex Bateman (one of the guys behind Pfam and Rfam, as well as involved in HMMER development) at FEBS yesterday. In addition to talking about the problems of increasing sequence amounts, Alex also provided some reflections on co-operativity and knowledge-sharing – not only among fellow researchers, but also to a wider audience. The starting point of this discussion is Rfam, where the annotation of RNA families is entirely based on a community-driven wiki, tightly integrated with Wikipedia. This means that to make a change in the Rfam annotation, the same change is also made at the corresponding Wikipedia page for this RNA family. And what’s the use of this? Well, as Alex says, for most of the keywords in molecular biology (and I would guess in all of science), the top hit on Google will be a Wikipedia entry. If not, the Wikipedia entry will be in the top ten list of hits, if a good Wiki page exists. This means that Wikipedia is the primary source of scientific information for the general public, as well as many scientists. Wikipedia – not scientific journals.


The consequence of this is that to communicate your research subject, you should contribute to its Wikipedia page. In fact, Bateman argues, we have a responsibility as scientists to provide accurate and correct information to the public through the best sources available, which in most cases would be Wikipedia. To put this in perspective (and here I once again borrow Alex’ words), if somebody told you ten years ago that there would be one single internet site that everybody would visit to find scientific information, and where discussion and continuous improvement would be allowed, encouraged and performed, most people would have said that was too good to be true. But that’s what Wikipedia offers. It is time to get rid of the Wiki-scepticism, and start improving it.

And so, what about the future of publishing? Bateman has worked hard to form an agreement with the journal RNA Biology to integrate the publishing into the process of adding to the easily accessible public information. To have an article on a new RNA family published under the journal’s RNA families track, the family must not only be submitted to the Rfam database, but the authors must also provide a Wikipedia-formatted article, which undergoes the same peer-review process as the journal article. This ensures high-quality Wikipedia material, as well as making new scientific discoveries public.

I don’t think it’s a long stretch to guess that in the future, more journals and/or funding agencies will take on similar approaches, as researchers and decision-makers discover the importance of correct, publicly available information. The scientific world is slowly moving towards being more open, also for non-scientists. This openness is of extremely high importance in these times of climate scepticism, GMO controversy, extinction of species, and nuclear power debate. For the public to make proper decisions and send a clear message to the politicians, scientists need to be much better at communicating the current state of knowledge, or what many people prefer to call “truth”.