Published paper: Guidelines for DNA quality checking

I have co-authored a paper together with, among others, Henrik Nilsson that was published today in MycoKeys. The paper deals with checking the quality of DNA sequences prior to using them for research purposes. In our opinion, a lot of the software available for sequence quality management is rather complex and resource intensive. Not everyone has the skills to master such software, and computational resources might also be scarce. Luckily, there’s a lot that can be done in quality control of DNA sequences using just manual means and a web browser. This paper puts these means together into one comprehensible and easy-to-digest document. Our target audience is primarily biologists who do not have a strong background in computer science but still have a dataset requiring DNA sequence quality control.

We have chosen to focus on the fungal ITS barcoding region, but the guidelines should be pretty general and applicable to most groups of organisms. In short, our five guidelines are:

- Establish that the sequences come from the intended gene or marker

Can be done using a multiple alignment of the sequences and verifying that they all feature some suitable, conserved sub-region (the 5.8S gene in the ITS case)

- Establish that all sequences are given in the correct (5’ to 3’) orientation

Examine the alignment for any sequences that do not align at all to the others; re-orient these; re-run the alignment step; and examine them again

- Establish that there are no (at least bad cases of) chimeras in the dataset

Run the sequences through BLAST in one of the large sequence databases, e.g. at NCBI (or in the ITS case, use the UNITE database), to verify that the best match comprises more or less the full length of the query sequences

- Establish that there are no other major technical errors in the sequences

Examine the BLAST results carefully, particularly the graphical overview and the pairwise alignment, for anomalies (there are some nice figures in the paper showing how it should and should not look)

- Establish that any taxonomic annotations given to the sequences make sense

Examine the BLAST hit list to see that the species names produced make sense
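The guidelines are meant to be followed by hand in a web browser, but two of the steps are easy to illustrate in a few lines of Python. The following is a minimal sketch and not something taken from the paper: the function names are hypothetical, the 0.9 coverage threshold is an arbitrary assumption, and the hit coordinates are assumed to be the 1-based, inclusive query positions reported for the best BLAST match.

```python
# Illustrative helpers only; names and the default threshold are assumptions.

# Translation table for complementing DNA bases (N stays N).
_COMPLEMENT = str.maketrans("ACGTNacgtn", "TGCANtgcan")


def reverse_complement(seq: str) -> str:
    """Re-orient a sequence by returning its reverse complement."""
    return seq.translate(_COMPLEMENT)[::-1]


def query_coverage(query_length: int, hit_start: int, hit_end: int) -> float:
    """Fraction of the query covered by the best match.

    hit_start and hit_end are 1-based, inclusive positions on the query.
    """
    return (abs(hit_end - hit_start) + 1) / query_length


def needs_closer_look(query_length: int, hit_start: int, hit_end: int,
                      min_coverage: float = 0.9) -> bool:
    """Flag queries whose best match covers only part of the sequence.

    A short best match is a hint of a possible chimera, not proof of one.
    """
    return query_coverage(query_length, hit_start, hit_end) < min_coverage
```

A sequence flagged by `needs_closer_look` would still have to be inspected manually in the BLAST graphical overview and pairwise alignment, as described above.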

A much more thorough description of these guidelines can be found in the paper itself, which is available under open access from MycoKeys. There’s simply no reason not to go there and at least take a look at it. Happy quality control!

Reference

Nilsson RH, Tedersoo L, Abarenkov K, Ryberg M, Kristiansson E, Hartmann M, Schoch CL, Nylander JAA, Bergsten J, Porter TM, Jumpponen A, Vaishampayan P, Ovaskainen O, Hallenberg N, Bengtsson-Palme J, Eriksson KM, Larsson K-H, Larsson E, Kõljalg U: Five simple guidelines for establishing basic authenticity and reliability of newly generated fungal ITS sequences. MycoKeys. Issue 4 (2012), 37–63. doi: 10.3897/mycokeys.4.3606 [Paper link]

Improving Swedish research – is there a need for a research elite?

I know that this is not supposed to be a political page, but writing this up, I realized that there is no way I can keep my political views entirely out of this post. So just a quick warning: the following text contains political opinions and is a reflection of my views and beliefs rather than well-supported facts.

So, Swedish minister for education Jan Björklund has announced the government’s plan to spend 3 billion SEK (~350 million EUR, ~450 million USD) on “elite” researchers over the next ten years. One main reason to do so is to strengthen Swedish research in competition with American universities, and to be able to recruit top researchers from other countries to Sweden. While I welcome the prospect of more money for research, I have to say I am very skeptical about how this money is to be distributed. First of all, giving more money to the researchers that have already succeeded (I guess this is how you would define elite researchers – if someone has a better idea, please tell both me and Jan Björklund) is not going to generate more innovative research – just more of the same (or similar) things to what these researchers already do. If the government is serious about its claim that Swedish research has a lower-than-expected output (which is a questionable statement in itself), the best way of increasing that output would be to give more researchers the opportunity to put their ideas into action. Second, a huge problem for research in Sweden is that a lot of the scientists’ time is spent on doing other stuff – writing grant applications, administering courses, filling in forms etc. Therefore, one way of improving research would be to put more money into funding at the university administration level, so that researchers actually have time to do what they are supposed to do. I will now provide my own four-point program for how I think that Sweden should move forward to improve the output of science.

1. Researchers need more time

My first point is that researchers need more time to do what they are supposed to do – science. This means that they cannot be expected to apply for money from six different research foundations every year, just to receive a very small amount of money that will keep them from getting thrown out for another 8 months. The short-term contracts that are currently the norm in Sweden create a system where way too much time is spent on writing grant applications – the majority of which will not succeed. In addition, researchers are often expected to be their own secretary, as well as organizing courses (not only lecturing). To solve this we need:

- Longer contracts for scientists. A grant should be large enough to secure five years of salary, plus equipment costs. This allows for some time to actually get the science done, not just the time to write the next application.

- Grants that come with a guaranteed five-year extension for projects that have fulfilled their goals in the first five years. This further secures longevity of researchers and their projects. Also, this allows universities to actually employ scientists, instead of the current system which is all about trying to work around the employment rules.

- More money to university administration. It is simply more cost efficient to have a secretary handling non-science related stuff in the department or group, as well as finance staff handling the finances. The current system expects every researcher to be a jack of all trades – which effectively reduces one to a master of none. More money to administration means more time spent on research.

2. Broad funding creates a foundation for success

Another problem is that if only a few projects are funded repeatedly, the success of Swedish research is very much bound to the success of these projects. While large-scale and high-cost projects are definitely needed, there is also a need to invest in a variety of projects. Many applied ideas have originated from very non-applied research, and applied research needs fundamental research to be done to be able to move forward. However, in the shortsighted governmental view of science, the output has to be almost immediate, which means that applied projects are much more likely to be funded. Thus, projects that could make fundamental discoveries, but are more complicated and take longer, will be down-prioritized by both researchers and universities. To make the situation even worse, Björklund et al. have promised more money to universities that cut out non-productive research, which almost guarantees that any project with a ten-year timeframe will not even be started.

If we are serious about making Swedish research successful, we need to do exactly the opposite: fund a lot of different projects, both applied and fundamental, regardless of their short-term value, because the ideas that are most likely to produce short-term results are probably also the ones that are the least innovative in the long term. Consequently, we need to:

- Spend research funding on a variety of projects, both of fundamental and applied nature.

- Secure funding for “crazy” projects that span long periods of time, at least five to ten years.

3. If we don’t dare to fail, we will not have a chance to win

Finally, research funding must become better at taking risks. If we only bet our money on the most successful researchers, there is absolutely no chance for young scientists to get funded, unless of course they have been picked up by one of the right supervisors. This means that the same ideas get disseminated through the system over and over again, at the expense of more innovative ideas that could pop up in groups with less money to realize them. If these untested ideas in smaller groups get funded, some of them will undoubtedly fail to produce research of high societal value. But some of them will likely develop entirely new ideas, which in the long term might be much more fruitful than throwing money at the same groups over and over again. Suggestions:

- Spend research funding broadly and with an active risk-gain management strategy.

- Allow for fundamental research to investigate completely new concepts – even if they are previously untested, and regardless of (or at least less dependent on) previous research output.

- Invest in infrastructure for innovative research – and do so fast. For example, the money spent on the sequencing facilities at Sci Life Lab in Stockholm is an excellent example of an infrastructure investment that gives a lot of researchers at different universities access to high-throughput sequencing, without each university having to invest in expensive sequencing platforms themselves. More such centers would both spur collaboration and allow for faster adoption of new technologies.

4. Competing with what we are best at

A mistake that is often made when trying to compete with those that are best in class is to try to compete by doing the same things as the best players do. This makes it extremely hard to win a game against exactly those players, as they are likely more experienced, have more resources, and already have the attention needed to get the resources we compete for. Instead, one could try to play the Wayne Gretzky trick: to try to skate where the puck is heading, instead of where it is today. Another approach would be to invent a new arena for the puck to land in, where you have better control over the settings than your competitors (slightly similar to what Apple did when the iPod was released, and Microsoft couldn’t use Windows to leverage their mp3-player Zune).

For Sweden, this would mean that we should not throw some bucks at the best players at our universities and hope that they will be happy with this (comparably small) amount of money. Instead, we should give them circumstances to work under that are much better or more appealing from other standpoints. This could be better job security, longer contracts, less administrative work, more secure grants, more freedom to decide over one’s time, and larger possibilities to combine work and family. Simply creating a better, more secure and nicer environment to work in. However, Björklund’s suggestions go the very opposite way: researchers should compete to be part of the elite community, and if you’re not in that group, you’d get thrown out. Therefore, I suggest (with the risk of repeating myself) that we should compete by:

- Offering longer contracts and grants for scientists.

- Giving scientists opportunities to combine work and family life.

- Embracing all kinds of science, both fundamental and applied, both short-term and long-term.

- Allowing researchers to take risks, even if they fail.

- Giving universities enough funding to let scientists do the science and administrative personnel do the administration.

- Funding large-scale collaborative infrastructure investments.

- Thinking of how to create an environment that is appealing for scientists, not only from an economic perspective.

A note on other important aspects of funding

Finally, I have now been focusing a lot on width as opposed to directed funding to an elite research squad. It is, however, apparent that we also need to allocate funding to bring more women into the top positions in the academy. Likely, a system which favors elite groups will also favor male researchers, judging from how the Swedish Foundation for Strategic Research picks their bets for the future. Also, it is important that young researchers without strong track records get funded, otherwise a lot of new and interesting ideas risk being lost.

In the fourth point of my proposal, I suggest that Sweden should compete at what Sweden is good at, that is to view researchers as human beings, who are most likely to succeed in an environment where they can develop their ideas in a free and secure way. For me, it is surprising that a minister of education representing a liberal party wants to exert such control over what is good and bad research. Putting up a working social security system around science seems much more logical than throwing money at those who already have. Apparently I have forgotten that our current government is not interested in having a working social security system – their interest seems to lie in deconstructing the very same structures.

Megraft paper in print

I just learned from Research in Microbiology that the paper on our software Megraft has now been assigned a volume and an issue. The proper way of referencing Megraft should consequently now be:

Bengtsson J, Hartmann M, Unterseher M, Vaishampayan P, Abarenkov K, Durso L, Bik EM, Garey JR, Eriksson KM, Nilsson RH: Megraft: A software package to graft ribosomal small subunit (16S/18S) fragments onto full-length sequences for accurate species richness and sequencing depth analysis in pyrosequencing-length metagenomes. Research in Microbiology. Volume 163, Issues 6–7 (2012), 407–412, doi: 10.1016/j.resmic.2012.07.001. [Paper link]

Megraft is currently at version 1.0.1, but I have a slightly updated version in the pipeline which will be made available later this fall.

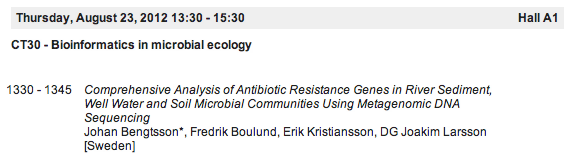

My ISME Program for Thursday and Friday

So I have finally settled on my own program for what I’m going to see at ISME today and tomorrow. As last time, the things I think I will actually attend have bold times. Generally, Thursday is easy – I will be at the Bioinformatics session, where I will also present myself (at 13.30 in Hall A1). Friday is a little bit trickier, but considerably less complicated to choose from than Tuesday was. See you around, and don’t forget to visit my poster (board 002A)!

Thursday

Morning session

08:30 – 09:20 From omics to the environment and back: unraveling how chemosynthetic symbionts gain energy and carbon

Nicole Dubilier, Symbiosis Group, Max Planck Institute of Marine Microbiology, Bremen, Germany (Hall A1)

1000 – 1030 Bayesian hierarchical models for defining enterotypes and ecotypes

Christopher Quince, University of Glasgow, UK (Hall A1)

1000 – 1030 Composition, structure and function of hot spring cyanobacterial mat communities: use of high-throughput technologies and theory to demarcate guilds, species and the functions they catalyze

Dave Ward, Montana State University, Bozeman, USA (Hall A2)

1000 – 1030 Stability and Resilience in the Human Microbiome

David Relman, Stanford University, USA (Auditorium 11)

1030 – 1100 From the HMP to the EMP: deriving insight from large-scale sequencing projects

Rob Knight, University of Colorado, USA (Hall A1)

1100 – 1130 Phylogenetic conservatism of functional traits in microorganisms

Adam Martiny, University of California, Irvine, USA (Hall A1)

1130 – 1200 Title to be confirmed

Jeroen Raes, VIB, Belgium (Hall A1)

1130 – 1200 Feast then famine: Fe bioavailability and utilization through geological time

Christopher Dupont, J. Craig Venter Institute, USA (Auditorium 15)

Lunch session

12:30 – 13:15 Tackling the pitfalls and unveiling the promise of soil metagenomics

Janet Jansson, Earth Sciences Division, Lawrence Berkeley, National Laboratory, Berkeley, CA, USA (Hall A1)

Afternoon session

1330 – 1345 Comprehensive Analysis of Antibiotic Resistance Genes in River Sediment, Well Water and Soil Microbial Communities Using Metagenomic DNA Sequencing

Johan Bengtsson [Sweden] (Hall A1)

1330 – 1345 Genome-wide diversification patterns of fresh and saltwater bacteria of the SAR11 clade inferred from single cell and metagenomics data

Siv Andersson [Sweden] (Auditorium 15)

1330 – 1345 Spatial and functional organization of deep-water microbial communities in the ocean: role of particle attachment

Gerhard J. Herndl [Austria] (Session Room B4)

1345 – 1400 Investigating diversified gene functions in microbial communities of the human gut

Lina Faller [USA] (Hall A1)

1400 – 1415 Large-scale characterization of the diversity and community composition of human intestinal microbiota

Leo Lahti [Netherlands] (Hall A1)

1400 – 1415 Significant and persistent impact of timber harvesting on soil microbial communities in northern coniferous forests

Martin Hartmann [Switzerland] (Hall A3)

1400 – 1415 From communities to single cells: finding functional coherence in the lake microbiome

Stefan Bertilsson [Sweden] (Session Room B4)

1415 – 1430 Deciphering the microbial community and the lignocellulolytic digestome of lower termite Coptotermes gestroi

João Paulo Franco Cairo [Brazil] (Hall A1)

1415 – 1430 Polyextremotolerant human opportunistic black yeasts inhabit dishwashers around the world

Nina Gunde-Cimerman [Slovenia] (Hall A2)

1415 – 1430 Dating the cyanobacterial origin of the chloroplast

Luisa Falcon [Mexico] (Auditorium 15)

1430 – 1445 Linking genotypes to phenotypes: characterizing viral proteins of unknown function

Jeremy Frank [USA] (Hall A1)

1430 – 1445 Living together apart: soil metagenomics and microbially relevant scales

George A. Kowalchuk [Netherlands] (Session Room B4)

1445 – 1500 The metagenomics of the dead: taxonomic and functional annotation methods for analysis of ancient datasets

Katarzyna Zaremba-Niedzwiedzka [Sweden] (Hall A1)

1445 – 1500 Ecological drivers of bacterial social evolution

Ines Mandic Mulec [Slovenia] (Auditorium 15)

1500 – 1515 Dirty little secrets for soil metagenomic assembly

Adina Howe [USA] (Hall A1)

1500 – 1515 Antibiotic resistance associated with waste water treatment plant effluent

Gregory Amos [United Kingdom] (Session Room B3)

1515 – 1530 Application of Unifrac and related bioinformatic tools to assess intra-species diversity within oral microbiomes in an anthropological context

Hans-Peter Horz [Germany] (Hall A1)

1515 – 1530 Comparative genomics of the ubiquitous, hydrocarbon degrading genus Marinobacter

Esther Singer [USA] (Auditorium 15)

Friday

Morning session

1000 – 1030 The fate of pesticides in soil and aquifers from a small-scale point of view: Does microbial and spatial heterogeneity have an impact?

Jens Aamand, The Geological Survey of Denmark and Greenland (GEUS), Denmark (Hall A2)

1000 – 1030 Commonness and rarity: Core and satellite species groups in microbial metacommunities

Christopher van der Gast, NERC Centre for Ecology & Hydrology, Wallingford, UK (Auditorium 11)

1100 – 1130 Spatial heterogeneity at the microbial scale: effects on microbial community structure and functioning

Naoise Nunan, BioEMCo, Centre INRA Versailles-Grignon, France (Hall A2)

1100 – 1130 Signalling controlled structure function of activated sludge microbial communities

Staffan Kjelleberg, University of New South Wales, Australia (Auditorium 15)

1130 – 1200 The impact of soil spatial heterogeneity on concepts of microbial interactions and diversity

James I. Prosser, University of Aberdeen, UK (Hall A2)

1130 – 1200 Micro-scale spatial expansion of microbial cells and mobile genetic elements

Barth Smets, Technical University of Denmark, Denmark (Auditorium 11)

Afternoon session

1400 – 1415 Extracellular vesicles from the marine cyanobacterium Prochlorococcus may mediate diverse community interactions

Steven Biller [USA] (Hall A1)

1415 – 1430 Simultaneous amplicon sequencing to explore co-occurrence patterns of bacterial, archaeal and eukaryotic components of rumen microbial communities

Sandra Kittelmann [New Zealand] (Session Room B4)

1430 – 1445 The enterotypes of great ape gut microbiomes

Andrew Moeller [USA] (Session Room B4)

1445 – 1500 Theoretical models for bacterial communities in drinking water as they travel and evolve through drinking water distribution systems

Joanna Schroeder [United Kingdom] (Auditorium 11)

1500 – 1515 Wastewater treatment plants from across the globe have a reproducible core microbial community

Aaron Marc Saunders [Denmark] (Session Room B4)

1530 – 1545 Every cloud has a silver lining: invasion by Limnohabitans planktonicus promotes the maintenance of diversity in bacterial communities

Gianluca Corno [Italy] (Hall A1)

1530 – 1545 Metabolic flexibility as a major predictor of spatial distribution in microbial communities

Kevin Purdy [United Kingdom] (Auditorium 15)

1545 – 1600 Microbial dynamics in a thawing world: linking microbial communities to increased methane flux in degrading permafrost

Gene Tyson [Australia] (Auditorium 15)

My ISME Program Monday and Tuesday

I have tried to put together a list of talks I find interesting at ISME14 next week (oh, well, going through the program book I find virtually all talks interesting, but some of them more so than others). I didn’t get all the way through yet, but I have a list for Monday and Tuesday. I have realized that Monday morning will likely run smoothly (a tough call between von Mering and Gilbert at 11.30, but otherwise the choices were not too hard). Monday afternoon I will probably spend in Session Room B3, where the Microbial Community Diversity: 16S and Beyond session will take place (many sessions have really silly, non-informative, names at this conference – what would not fit into the concept “16S and beyond“?). I will really miss the talk by Otto X. Cordero on how ecological populations of bacteria act as socially cohesive units of antibiotic production and resistance, but I simply don’t think there will be time for a trip to Hall A2 and back just for that talk.

Another tough call is the round table discussions on Monday evening. Here, however, I feel that I have a duty to attend the Unraveling the bacterial mobilome: Potentials and limitations of the present methodology session in Auditorium 11, which also might be the most relevant one for my current research projects.

Below you find my list of interesting talks at ISME14, sorted by time. Times in bold are the ones I will aim to attend in case I have to make a choice. Also, of course I will attend my own poster presentation on Monday afternoon (poster board number 267A). Hope to see you there!

Monday

Morning session

1000 – 1030 Cross-biome comparisons of soil microbial communities and their functional potentials

Noah Fierer, University of Colorado at Boulder, USA (Hall A2)

1030 – 1100 Experimental biogeography of bacteria in miniature ecosystems

Thomas Bell, Imperial College, London, UK (Hall A2)

1130 – 1200 Tracking OTUs around the environment – a challenge for clustering algorithms and interpretation

Christian von Mering, University of Zurich, Switzerland (Hall A1)

1130 – 1200 The Earth Microbiome Project: A new paradigm in geospatial and temporal studies of microbial ecology

Jack A. Gilbert, Argonne National Laboratory and University of Chicago, USA (Hall A2)

Afternoon session

1330 – 1345 What makes a bacterium fresh? Genome wide functional comparison of marine and freshwater SAR11

Alexander Eiler [Sweden] (Auditorium 15)

1330 – 1345 Rarity and the problem of measuring diversity

Bart Haegeman [France] (Session Room B3)

1345 – 1400 Complexity does not necessarily create diversity

Tom Curtis [United Kingdom] (Session Room B3)

1345 – 1400 Depletion of the rare bacterial biosphere in expanding oceanic oxygen minimum zones

J. Michael Beman [USA] (Session Room B4)

1400 – 1415 Selection history affects the predictability of microbial ecosystem development

Andrew Free [United Kingdom] (Session Room B3)

1415 – 1430 Can we use microbial life strategies to understand the response of microbial communities to moisture stress?

Sarah Evans [USA] (Session Room B3)

1430 – 1445 Towards a unified taxonomy for ribosomal RNA databases

Pelin Yilmaz [Germany] (Session Room B3)

1445 – 1500 Systematic design of 18S rDNA primers for assessing eukaryotic diversity

Luisa Hugerth [Sweden] (Session Room B3)

1445 – 1500 Either Of yeast, grapes and wasps: Saccharomyces cerevisiae ecology revised

Irene Stefanini [Italy] (Hall A1)

1500 – 1515 Soil bacterial biogeography: a taxonomic and functional perspective

Rob Griffiths [United Kingdom] (Hall A1)

1500 – 1515 Ecological populations of bacteria act as socially cohesive units of antibiotic production and resistance in the wild

Otto X. Cordero [USA] (Hall A2)

1515 – 1530 Micro-scale drivers of bacterial diversity and biogeography

George Kowalchuk [Netherlands] (Hall A1)

1515 – 1530 Time and space resolved deep metagenomics to investigate selection pressures on low abundant species in complex environments

Mads Albertsen [Denmark] (Session Room B3)

1515 – 1530 Bacterial community structure and composition in high arsenic contaminated ground water from West Bengal and microbial role in subsurface arsenic release

Pinaki Sar [India] (Auditorium 10)

Evening session (round-table discussions)

17:30 – 19:30 RT11: Microbial Network Ecology: Deciphering Complex Network Interactions in Microbial Communities (Hall A3)

17:30 – 19:30 RT16: Unraveling the bacterial mobilome: Potentials and limitations of the present methodology (Auditorium 11)

17:30 – 19:30 RT17: Microbial invasions: What defines whether a microorganism is an invasive species? (Auditorium 12)

Tuesday

Morning session

08:30 – 09:20 You are what you eat but not always what omics predicts: On the importance of single cell ecophysiology of microbes

Michael Wagner, Department of Microbial Ecology, University of Vienna, Austria (Hall A1)

1000 – 1030 Can dormancy theory help us retrieve rare and uncultured microbes?

Jay Lennon, Indiana University, USA (Hall A3)

1000 – 1030 A critical review of mean metabolic rates of subsurface microbial communities

Bo Barker Jørgensen, University of Aarhus, Denmark (Auditorium 11)

1000 – 1030 Horizontal genetic transfer and the origin of species in bacteria

Frederick M. Cohan, Wesleyan University, USA (Auditorium 12)

1030 – 1100 Phylogenomic networks reveal mechanisms for lateral gene transfer during microbial evolution

Tal Dagan, Heinrich Heine University Düsseldorf, Germany (Auditorium 12)

1100 – 1130 Coevolution between plasmids and their hosts: consequences for the persistence of drug resistance

Eva Top, University of Idaho, USA (Auditorium 12)

1130 – 1200 The communal gene pool in natural environments

Søren J. Sørensen, University of Copenhagen, Denmark (Auditorium 12)

1130 – 1200 Let genomes do the talking: Using genomics to unravel genes under selection in archaeal populations

Rachel Whitaker, University of Illinois, USA (Auditorium 15)

Afternoon session

1330 – 1345 Spatial patterns of microbial communities at a soil microscale

Florentin Constancias [France] (Hall A2)

1330 – 1345 Microbial biofilm biodiversity distribution in a stream network

Katharina Besemer [Austria] (Session Room B3)

1330 – 1345 Effects of loss of rare microbes on soil ecosystem services

Gera Hol [Netherlands] (Auditorium 10)

1345 – 1400 Targeted recovery of novel phylogenetic diversity from next-generation sequence data

Josh D. Neufeld [Canada] (Auditorium 10)

1345 – 1400 Functionally relevant microdiversity of Nitrospira-like bacteria in activated sludge

Christiane Dorninger [Austria] (Hall A3)

1345 – 1400 Hot spot of horizontal gene transfer: high abundance and diversity of mobile genetic elements in bacterial communities of on-farm pesticide biopurification systems

Kornelia Smalla [Germany] (Auditorium 12)

1400 – 1415 Transcriptional dynamics of catabolic genes in soil – fine-scale analysis for a deeper understanding of soil functioning

Mette H Nicolaisen [Denmark] (Hall A1)

1400 – 1415 Seasonal synchronicity and specificity of microbial community and population dynamics across four alpine lakes

Ryan Mueller [Spain] (Session Room B3)

1400 – 1415 Mapping genotypic diversity onto niche adaptation

Yutaka Yawata [USA] (Auditorium 12)

1415 – 1430 Soil bacterial community shift after chitin enrichment: an integrative metagenomic approach

Samuel Jacquiod [France] (Hall A3)

1415 – 1430 MetaGenomic Species: adding structure to metagenomics data

H. Bjørn Nielsen [Denmark] (Auditorium 12)

1430 – 1445 Impact of diet on human gut microbial communities

Cindy Nakatsu [USA] (Hall A3)

1430 – 1445 Insights into the bovine rumen plasmidome

Itzhak Mizrahi [Israel] (Auditorium 12)

1445 – 1500 Mechanisms of biocide-induced antibiotic resistance: from single cell to community

Seungdae Oh [USA] (Hall A3)

1445 – 1500 Antibiotic resistance in soil bacterial communities: comparison of metals and antibiotic residues as selecting agents and spatial heterogeneity of coselected resistance patterns

Kristian Koefoed Brandt [Denmark] (Hall A2)

1445 – 1500 Viruses, a controlling force on Prokaryotic diversity?

Ruth-Anne Sandaa [Norway] (Session Room B4)

1445 – 1500 Regulation of transfer of the ICEclc element of Pseudomonas

Jan Roelof van der Meer [Switzerland] (Auditorium 12)

1500 – 1515 Pathogen removal in slow sand filters as revealed by stable isotope probing coupled with next generation sequencing

Sarah Haig [United Kingdom] (Hall A3)

1500 – 1515 Development of zinc tolerance by the ammonia oxidising community is restricted to ammonia oxidising Bacteria, rather than Archaea

Stefan Ruyters [Belgium] (Hall A2)

1500 – 1515 Survival strategies of plasmids in chemostats and biofilms: effect of hostrange and competition

Jan-Ulrich Kreft [United Kingdom] (Auditorium 12)

1515 – 1530 Evaluating process-related and seasonal changes in bacterial community in drinking water treatment and distribution systems

Ameet Pinto [USA] (Hall A3)

1515 – 1530 Distribution, diversity, and evolution of cyclic peptide secondary metabolites in natural populations of marine picocyanobacteria

Andres Cubillos-Ruiz [USA] (Hall A1)

1515 – 1530 Permissiveness of soil microbial communities toward receipt of mobile genetic elements

Sanin Musovic [Denmark] (Auditorium 12)

Evening session

1830 Víctor de Lorenzo, Molecular Environmental Microbiology Laboratory, the Spanish National Research Council, Spain

Conflict management and division of labor in bacterial populations degrading recalcitrant aromatics

1915 Stephen J. Giovannoni, Oregon State University, USA

Outliers: Extreme Selection for Minimalism in Ocean Microbial Plankton

ISME14 begins today

I am on my way to Copenhagen for the ISME14 conference that begins today. I am quite excited about this event, and will present three posters (two as first author), and give a short talk on antibiotic resistance gene identification and metagenomics. My talk will be in the Bioinformatics in Microbial Ecology session on Thursday afternoon (at 13.30).

If you’d like to talk about Metaxa and Megraft, I will present an SSU-oriented poster in the Monday afternoon poster section (board number 267A). My antibiotic resistance gene poster will be presented on Thursday afternoon (board number 002A), and I really encourage everyone interested in metagenomics (especially metagenomic assembly) to come talk to me then! Finally, I am also partially responsible for a poster on periphyton metagenomics with Martin Eriksson as its main author. This poster is also presented on Monday, in the Microbial Dispersion and Biogeography session (board number 021A).

I hope to be able to make another post later tonight on which sessions are “essential” for me at this conference. Hope to see you there soon!

I am married

As many of you probably know, I have gotten married, which of course is a huge step for me. In short, I think the main way it will affect my scientific life is that I am changing my surname. So from now on, I am going to publish under the name “Johan Bengtsson-Palme” instead of just “Johan Bengtsson”. Not that much of a change, but it could still be nice to know that Bengtsson-Palme J, and Bengtsson J might very well be the same person.

On a side note, we just recently got a paper accepted on guidelines for quality control of ITS barcode sequences. More on that to follow soon.

New paper accepted: Megraft

Yesterday, our paper on Megraft – a software tool to graft ribosomal small subunit (16S/18S) fragments onto full-length SSU sequences – became available as an accepted online early article in Research in Microbiology. Megraft is built upon the notion that when examining the depth of a community sequencing effort, researchers often use rarefaction analysis of the ribosomal small subunit (SSU/16S/18S) gene in a metagenome. However, the SSU sequences in metagenomic libraries generally are present as fragmentary, non-overlapping entries, which poses a great problem for this analysis. Megraft aims to remedy this problem by grafting the input SSU fragments from the metagenome (obtained by e.g. Metaxa) onto full-length SSU sequences. The software also uses a variability model which accounts for observed and unobserved variability. This way, Megraft enables accurate assessment of species richness and sequencing depth in metagenomic datasets.

The algorithm, efficiency and accuracy of Megraft are thoroughly described in the paper. It should be noted that this is not a panacea for species richness estimates in metagenomics, but it is a huge step forward over existing approaches. Megraft shares some similarities with EMIRGE (Miller et al., 2011), which is a software package for reconstruction of full-length ribosomal genes from paired-end Illumina sequences. Megraft, however, is set apart in that it has a strong focus on rarefaction, and also functions when the number of sequences is small, which is often the case in 454 and Sanger-based metagenomics studies. Thus, EMIRGE and Megraft seek to solve a roughly similar problem, but for different sequencing technologies and sequencing scales.
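For readers unfamiliar with rarefaction, here is a minimal Python sketch of the classic analytic rarefaction calculation (the expected number of distinct taxa in a random subsample drawn without replacement). It is not Megraft's algorithm and says nothing about the grafting step or the variability model; the function names and the example counts are made up purely for illustration.

```python
from math import comb


def rarefied_richness(counts, n):
    """Expected number of distinct taxa in a random subsample of n sequences.

    counts: number of sequences observed per taxon (hypothetical input).
    n: subsample size, drawn without replacement from all sequences.
    """
    total = sum(counts)
    if not 0 <= n <= total:
        raise ValueError("subsample size must be between 0 and the total count")
    all_draws = comb(total, n)
    # For each taxon, 1 minus the probability that the subsample misses it.
    return sum(1 - comb(total - c, n) / all_draws for c in counts)


def rarefaction_curve(counts, step=1):
    """List of (subsample size, expected richness) points for plotting."""
    total = sum(counts)
    return [(n, rarefied_richness(counts, n)) for n in range(0, total + 1, step)]


if __name__ == "__main__":
    # Made-up abundances for three taxa, only to show the call.
    for size, richness in rarefaction_curve([5, 3, 2], step=2):
        print(size, round(richness, 3))
```

A curve that flattens out as the subsample grows suggests that the sequencing depth has captured most of the taxa present; a curve that is still climbing suggests that more sequencing would reveal more.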

Megraft is available for download here, and the paper can be read here.

- Bengtsson, J., Hartmann, M., Unterseher, M., Vaishampayan, P., Abarenkov, K., Durso, L., Bik, E.M., Garey, J.R., Eriksson, K.M., Nilsson, R.H. (2012). Megraft: A software package to graft ribosomal small subunit (16S/18S) fragments onto full-length sequences for accurate species richness and sequencing depth analysis in pyrosequencing-length metagenomes and similar environmental datasets. Research in Microbiology, doi: 10.1016/j.resmic.2012.07.001.

- Miller, C. S., Baker, B. J., Thomas, B. C., Singer, S. W., & Banfield, J. F. (2011). EMIRGE: reconstruction of full-length ribosomal genes from microbial community short read sequencing data. Genome Biology, 12(5), R44. doi:10.1186/gb-2011-12-5-r44

One more thing…

I realized that I have been using a newer version of Metaxa than most of you for the last couple of months. This bug fix was written sometime in February or March, and we have kept it internal to make sure it works as it should. Then other things came up and we never got around to actually releasing it. But with testing passed and upcoming versions of Metaxa in the pipeline, I think it is about time that everyone gets their hands on the latest Metaxa version.

It’s only two small things this time:

- Slight tweaks to the new HMM scoring system, making Metaxa just a little bit faster

- Fixed a rarely occurring bug causing the --heuristics option to be ignored in certain circumstances

Metaxa and Illumina data

For the last few months I have been struggling (part time) with getting Metaxa to eat Illumina paired-end data. This is a pretty tricky task, mainly because Illumina reads are so much shorter than those obtained by Sanger and 454 sequencing. Therefore, I am more than happy to inform the community that today (the day before I go on vacation) I have a working prototype up and running. In fact, calling it a prototype is unfair; it is a fairly mature piece of software by now. Currently, I am running it on test data sets, and I will try to keep it running over the next couple of weeks. Thereafter, I hope to be able to release it sometime this autumn (but don’t expect a September release!), harnessing the power of Illumina sequencing for SSU identification. Stay tuned, and have a great summer!